A female patient underwent a routine mammogram in her early 40s and the results revealed a complicated pattern of white patches in her breast tissue. It was difficult for even the most skilled radiologists to distinguish whether the marks were benign or cancerous. Despite the uncertainty, her medical team determined that the spots were not a cause for immediate concern. Looking back, the patient realizes that she already had cancer at the time, but it went undetected.

Over the next two years, the patient underwent a series of medical tests, including a second mammogram, a breast MRI, and a biopsy. Each test produced uncertain or contradictory results. She was finally diagnosed with breast cancer in 2014, but the road to that diagnosis had been exasperating. She could not help but question how three tests could produce three different outcomes.

After receiving treatment and making a successful recovery, the patient was still disturbed by the possibility that the uncertainties of interpreting a mammogram could result in treatment delays. She became increasingly aware of the vulnerability of current medical practices, prompting her to make a significant career decision. She was determined to change the status quo, claiming, “I absolutely have to change it.”

The patient, who was a computer scientist at the Massachusetts Institute of Technology, had no prior background in the field of healthcare. Her area of expertise involved utilizing artificial intelligence for natural language processing. Nevertheless, she was seeking a new avenue for research and decided to collaborate with radiologists. Together, they sought to develop machine learning algorithms that could use computers’ advanced visual analysis capabilities to detect subtle patterns in mammograms that may be undetectable to the human eye.

For a span of four years, the patient and her team trained a computer program to analyze mammograms obtained from approximately 32,000 women of various ages and ethnicities. They told the program which women had received a diagnosis of cancer within five years of their scan. The team then tested the program’s predictions on an additional 3,800 patients. The resulting algorithm, published in Radiology, was substantially more accurate at predicting the presence (or absence) of cancer than typical clinical practice. When the team used the program to analyze the patient’s own mammograms from 2012, which her physician had deemed normal, the algorithm correctly predicted that she was at a greater risk of developing breast cancer within five years than 98 percent of other patients.
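The "98 percent" figure is a percentile rank: the share of other patients whose predicted risk falls below hers. A minimal Python sketch of that calculation follows, assuming each patient's risk is summarized as a single number. The `risk_percentile` function and the cohort values are hypothetical and are not the team's actual model.

```python
from bisect import bisect_left


def risk_percentile(score, cohort_scores):
    """Return the percentage of cohort scores strictly below `score`."""
    ordered = sorted(cohort_scores)
    below = bisect_left(ordered, score)  # count of scores lower than `score`
    return 100.0 * below / len(ordered)


# Hypothetical cohort: 100 patients with risk scores 0, 1, ..., 99.
cohort = list(range(100))
print(risk_percentile(98, cohort))  # 98.0
```

A patient at the 98th percentile would stand out in a screening list even if her scan looked unremarkable to a human reader.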

In addition to detecting subtle details that may be invisible to the human eye, AI algorithms can develop new approaches to interpreting medical images that may not be comprehensible to humans. Researchers, start-up companies, and scanner manufacturers are creating AI programs with the goal of:

- Improving the accuracy and speed of diagnoses.

- Offering superior medical care in underserved areas without access to radiologists.

- Identifying novel links between biology and disease.

- Predicting an individual’s lifespan.

The implementation of AI applications in medical clinics has been swift, and physicians have responded with mixed emotions. While they recognize the technology’s potential to decrease their workload, they also worry about losing their jobs to machines. The use of algorithms has also led into uncharted regulatory territory, particularly because the machines keep learning and evolving. When an algorithm produces an incorrect diagnosis, it is unclear who bears responsibility. Despite these concerns, many physicians are optimistic about the potential benefits of AI programs and believe that they can help improve healthcare for all.

A controversial topic

The idea of using computers to interpret radiological scans has been around for some time. In the 1990s, radiologists began employing computer-assisted diagnosis (CAD) to identify breast cancer in mammograms. The technology was considered groundbreaking, and medical facilities quickly adopted it. However, CAD proved more complicated to operate than existing methods, and some studies even suggest that clinics using CAD made more errors than those that did not. That failure left many physicians skeptical of computer-aided diagnostics.

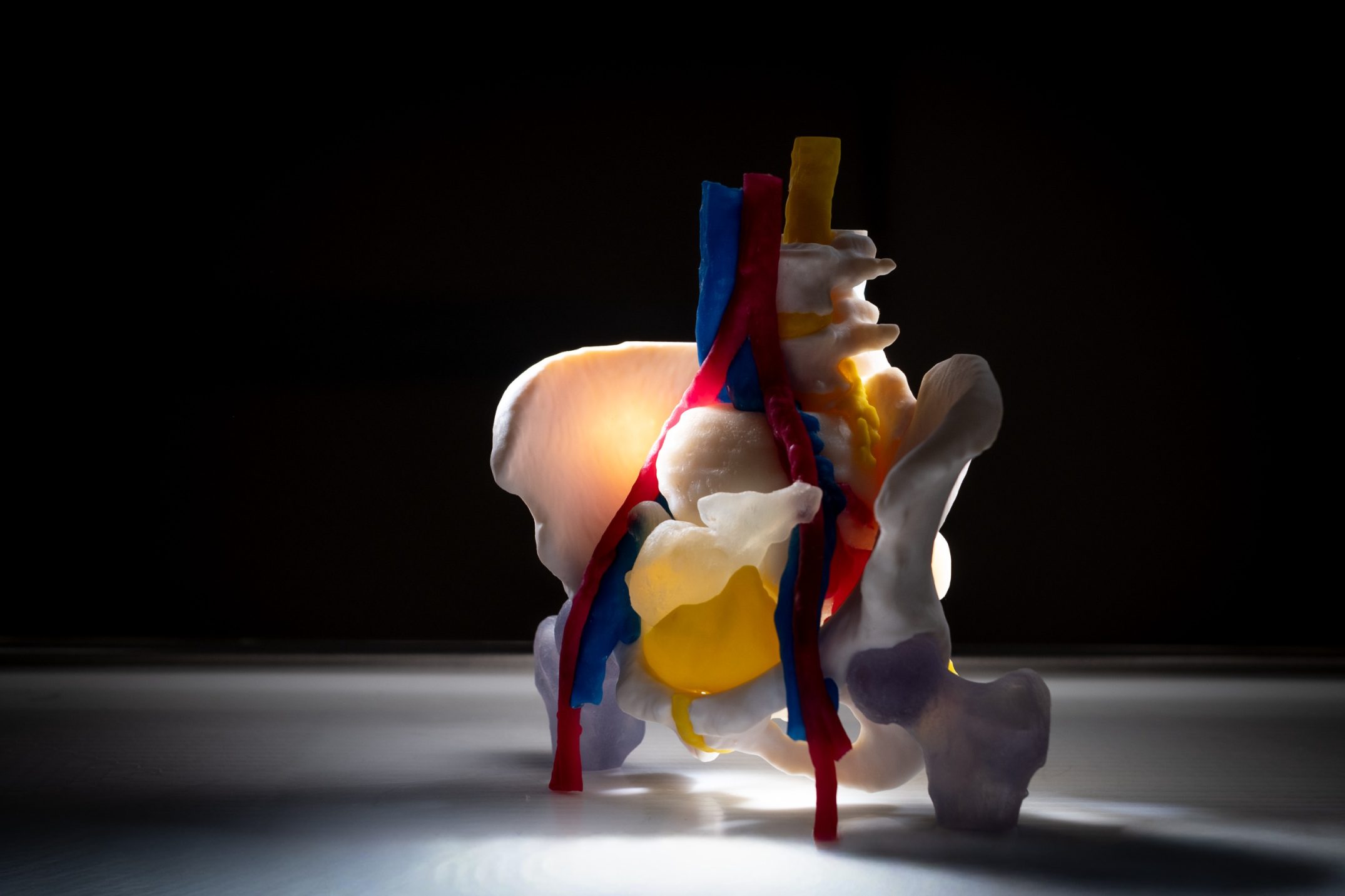

Over the last ten years, computer vision has made significant strides, not just in everyday applications such as facial recognition, but also in the field of medicine. The progress is largely attributed to the advancement of deep-learning techniques, wherein a computer is provided with a series of images and allowed to make its own connections between them, ultimately creating a network of associations. In medical imaging, this could involve informing the computer of which images contain cancer and then setting it free to identify characteristics that are common to those images but not present in images without cancer.

The use of AI technologies in radiology has rapidly developed and been widely adopted, with “AI and imaging” being a popular and current topic at every major conference.

The U.S. Food and Drug Administration (FDA) does not keep a record of approved AI products. Yet, according to a digital medicine researcher, the agency is granting approval to over one medical imaging algorithm per month. A 2018 study conducted by Reaction Data revealed that 84% of radiology clinics in the United States had already adopted or planned to adopt AI programs. China is rapidly growing in this field, with over 100 companies creating AI applications for healthcare.

The start-up Aidoc, for example, focuses on developing algorithms that examine CT scans for abnormalities and prioritize patients who require immediate attention. Aidoc also monitors how frequently doctors use the program and how much time they spend verifying its findings.
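The triage idea can be illustrated with a priority queue that reorders a reading worklist by an urgency score. This is only a sketch under stated assumptions: the `prioritize` function, the scan IDs, and the 0-to-1 urgency scores are invented for illustration and do not describe Aidoc's product.

```python
import heapq


def prioritize(scans):
    """Yield scan IDs from most to least urgent.

    `scans` is a list of (scan_id, urgency) pairs, where urgency is a
    hypothetical model score between 0 and 1.
    """
    # heapq is a min-heap, so negate urgency; arrival order breaks ties.
    heap = [(-urgency, arrival, scan_id)
            for arrival, (scan_id, urgency) in enumerate(scans)]
    heapq.heapify(heap)
    while heap:
        _, _, scan_id = heapq.heappop(heap)
        yield scan_id


worklist = [("scan-a", 0.10), ("scan-b", 0.95), ("scan-c", 0.40)]
print(list(prioritize(worklist)))  # ['scan-b', 'scan-c', 'scan-a']
```

Every scan is still read by a radiologist; only the order changes.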

Saving time is essential when it comes to saving a patient. According to a recent study of chest x-rays for collapsed lungs, radiologists classify more than 60 percent of the scans as high priority, which creates a huge workload in which truly urgent cases are mixed with less urgent ones. GE Healthcare, for example, has embedded a set of AI tools into its scanners; the tools, which were approved by the FDA in September 2022, automatically flag the most critical cases.

Computers can handle vast amounts of data, enabling them to carry out analytical tasks that are beyond human capacity. For instance, Google is using its computing power to develop AI algorithms that can transform two-dimensional CT images of lungs into a three-dimensional structure and assess the entire structure to detect the presence of cancer. In contrast, radiologists need to examine these images individually and reconstruct them in their minds.

Additionally, a Google algorithm can determine patients’ risk of cardiovascular disease by analyzing a scan of their retinas and detecting subtle changes linked to blood pressure, cholesterol, smoking history, and aging.

Links between biological features and patient outcomes

AI can help reveal entirely new links between biological features and patient outcomes. A study published in JAMA Network Open in 2019 showcased a deep-learning algorithm trained on over 85,000 chest x-rays from individuals who had been monitored for over 12 years in two major clinical trials. The algorithm estimated each patient’s risk of dying during the 12-year period. The researchers found that 53 percent of the people whom the AI classified as high-risk died within 12 years, compared to only 4 percent in the low-risk category. The algorithm lacked information about who died and the cause of death. According to the lead investigator, a radiologist at Massachusetts General Hospital, the algorithm could be a valuable tool for assessing patient health if combined with a physician’s evaluation and other data, such as genetics.
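The comparison between risk categories comes down to an observed death rate per group. A small Python sketch of that arithmetic follows; the group sizes of 100 are invented so that the fractions mirror the reported 53 and 4 percent, and `death_rate_by_group` is a hypothetical helper, not code from the study.

```python
from collections import defaultdict


def death_rate_by_group(records):
    """Return {risk_group: fraction who died} from (risk_group, died) pairs."""
    counts = defaultdict(lambda: [0, 0])  # group -> [deaths, total]
    for group, died in records:
        counts[group][0] += int(died)
        counts[group][1] += 1
    return {group: deaths / total for group, (deaths, total) in counts.items()}


# Invented cohort sized to mirror the reported percentages.
records = ([("high", True)] * 53 + [("high", False)] * 47
           + [("low", True)] * 4 + [("low", False)] * 96)
print(death_rate_by_group(records))  # {'high': 0.53, 'low': 0.04}
```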

The researchers investigated the algorithm’s workings by identifying the image regions that it used for its calculations. Some regions, such as waist circumference and breast structure, were expected, as they can indicate known risk factors for particular illnesses. However, the algorithm also looked at the area beneath the patients’ shoulder blades, which has no established medical significance. One possible explanation is that reduced flexibility may be one predictor of a shorter lifespan: when taking a chest x-ray, patients are often required to embrace the machine, and less healthy individuals who cannot wrap their arms completely around it may position their shoulders differently.

The so-called black box problem refers to the disconnect between the way computers and humans process information, which leaves an algorithm’s reasoning opaque to its users. Experts debate whether this presents a problem in medical imaging. If an algorithm consistently improves doctors’ performance and patients’ health, doctors may not need to understand how it works. After all, researchers still do not understand the mechanisms of many drugs, such as lithium, which has been used to treat depression for decades. A digital medicine researcher at the Scripps Research Institute questions whether machines are being held to a higher standard than humans, given that medicine is already a black box.

Still, the opaque nature of the black box creates opportunities for misunderstandings between humans and AI. For instance, researchers at the Icahn School of Medicine at Mount Sinai were confounded by a discrepancy in their deep-learning algorithm’s performance in identifying pneumonia in lung x-rays. While the algorithm had an accuracy rate of over 90 percent on x-rays produced at Mount Sinai, it was significantly less accurate with scans from other institutions. They later discovered that the algorithm was not only analyzing the images, but also factoring in the likelihood of a positive diagnosis based on the prevalence of pneumonia at each institution. The researchers had neither intended nor wanted the program to behave this way.
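A deliberately extreme toy example shows how this kind of shortcut can inflate internal accuracy. The "model" below ignores the image entirely and learns only the majority label at each site. All names, site labels, and counts are invented for illustration; the real algorithm combined image features with such institutional cues rather than relying on them alone.

```python
from collections import Counter, defaultdict


def fit_site_shortcut(train):
    """Learn the majority label per site from (site, label) pairs."""
    per_site = defaultdict(Counter)
    overall = Counter()
    for site, label in train:
        per_site[site][label] += 1
        overall[label] += 1
    default = overall.most_common(1)[0][0]  # fallback for unseen sites
    table = {site: c.most_common(1)[0][0] for site, c in per_site.items()}
    return lambda site: table.get(site, default)


def accuracy(model, data):
    """Fraction of (site, label) pairs the model labels correctly."""
    return sum(model(site) == label for site, label in data) / len(data)


# Invented training data: pneumonia is common at "icu" and rare at "clinic".
train = ([("icu", 1)] * 90 + [("icu", 0)] * 10
         + [("clinic", 1)] * 15 + [("clinic", 0)] * 135)
# Invented external data from a site the model has never seen.
external = [("other", 1)] * 50 + [("other", 0)] * 50

model = fit_site_shortcut(train)
print(accuracy(model, train))     # 0.9
print(accuracy(model, external))  # 0.5
```

The 90 percent internal accuracy reflects nothing about the scans themselves, which is why it collapses to chance at a new institution.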

Researchers also worry about confounding factors that can undermine the accuracy and effectiveness of AI. These include biased data sets used to train the algorithms, which can stem from differences in imaging circumstances, such as emergency versus routine examinations, or from the way that institutions label their images. Such biases can cause an algorithm to miss key features or make incorrect assumptions about a patient’s condition, and failing to account for them can lead to inaccurate diagnoses or treatment plans.

In this instance, the solution could be to train AI algorithms on data sets that are diverse in terms of patient populations and geographic locations, and then test the algorithm without any modifications in a new patient population. Still, very few algorithms have undergone this type of testing. Only a small number of studies claiming that AI outperforms radiologists were tested in populations that were different from the population where they were developed.

As researchers become more aware of this issue, there may be an increase in prospective studies that test algorithms in novel settings. For example, a team recently finished testing its mammogram AI on 10,000 scans from the Karolinska Institute in Sweden and found that it performed as well there as it did in Massachusetts. The team is currently collaborating with hospitals in Taiwan and Detroit to test the AI on more diverse patient populations. They highlight that current breast cancer risk assessment standards are significantly less precise in African-American women because they were mainly developed using scans from white women.

Concerns

If AI provides an incorrect diagnosis, it can be difficult to determine who is at fault: the doctor or the program. If an AI system leads a physician to make an incorrect diagnosis, the physician may not be able to explain why, and the details of the system’s methodology may be protected by the company as a closely guarded trade secret.

Because medical AI systems are relatively new, they have not yet been tested in medical malpractice lawsuits. Thus, it is uncertain how courts will assign liability and what level of transparency will be necessary.

Many medical algorithms are created by modifying deep-learning techniques originally designed for other forms of image analysis. Many researchers believe that it is feasible to develop an algorithm that can explain its decisions. However, creating a transparent algorithm from scratch is significantly more challenging than adapting a pre-existing black box algorithm to medical data. As a result, most researchers probably let an algorithm run and only afterwards try to understand how it reached its conclusions.

Scientists are developing transparent AI algorithms that can analyze mammograms to detect potential tumors while continuously informing researchers about how they are working. Progress, however, has been hindered by the scarcity of suitable images for training. Publicly available images are often inadequately labeled or were captured using outdated equipment that is no longer in use. Without large and diverse data sets, algorithms are prone to picking up on extraneous factors.

Regulators face difficulties in dealing with black box algorithms and the learning capabilities of AI systems. Unlike drugs, which function consistently, machine learning algorithms evolve as they receive more patient data. Because an algorithm relies on numerous inputs, even a seemingly minor change, such as a new hospital IT system, may significantly disrupt the AI program. Like humans, machines can also get sick, in their case by being infected with malware. For these reasons, critics caution against trusting an algorithm on its own when a person’s life is at stake.

The FDA proposed certain guidelines to manage algorithms that develop and improve over time. One of the guidelines requires developers to monitor changes in their algorithms to ensure that they continue to function as intended. If unexpected changes occur that may need reassessment, they must inform the FDA. Additionally, the agency is working on formulating best manufacturing practices and may mandate that companies outline their expectations for algorithmic evolution, as well as a protocol for managing those changes.
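One simple way a developer might watch for such changes is to compare how often the algorithm currently flags a positive finding with the rate seen when it was validated. The sketch below is an assumption made for illustration: the `drift_alert` function, the 5-point tolerance, and the sample data are hypothetical and are not drawn from the FDA proposal.

```python
def drift_alert(baseline_rate, recent_predictions, tolerance=0.05):
    """Return True if the recent positive rate strays from the baseline.

    `recent_predictions` is a list of 0/1 model outputs; `tolerance` is a
    hypothetical threshold on the absolute difference in positive rates.
    """
    recent_rate = sum(recent_predictions) / len(recent_predictions)
    return abs(recent_rate - baseline_rate) > tolerance


stable = [1] * 12 + [0] * 88   # 12 percent flagged, close to baseline
shifted = [1] * 30 + [0] * 70  # 30 percent flagged, far from baseline
print(drift_alert(0.10, stable))   # False
print(drift_alert(0.10, shifted))  # True
```

A shift in the flag rate does not prove the algorithm is wrong, since the patient mix may have changed, but it is a cheap signal that a closer review is due.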

Machines vs doctors, or machines and doctors?

In 2012, a technology venture capitalist alarmed a medical audience by forecasting that algorithms would replace 80 percent of doctors.

In 2015, only 86 percent of radiology resident positions in the United States were filled, as opposed to 94 percent the previous year, although these figures have improved in recent years. Furthermore, in a 2018 poll of 322 Canadian medical students, 68 percent expressed the belief that AI would diminish the need for radiologists.

Despite these concerns, most experts and AI manufacturers are skeptical that AI will replace physicians anytime soon. Because human biology is intricate, even if an algorithm is superior at diagnosing a specific issue, combining it with a doctor’s expertise and understanding of the patient’s unique history will lead to a better result.

An AI that is proficient in a single task has the potential to relieve radiologists of tedious work, enabling them to spend more time engaging with patients.

Even so, many experts predict that radiologists’ tools, training, and everyday tasks will change considerably because of artificial intelligence algorithms.

There are a few rare cases where AI can make medical decisions without requiring human intervention. For instance, in 2018, IDx Technology in Coralville, Iowa, developed an algorithm that can analyze retinal images to detect diabetic retinopathy with 87 percent accuracy. The FDA approved this algorithm as the first of its kind that does not require a physician to interpret the image. According to IDx’s CEO, the company has taken on legal responsibility for any medical errors, since no physician is involved in the decision-making process.

AI algorithms are expected to assist doctors rather than replace them in the near future. This is particularly useful for physicians working in developing countries where there may be a shortage of medical equipment and trained radiologists who can interpret scans. As medicine becomes more specialized and dependent on image analysis, the gap between the standard of care in wealthier and poorer regions is growing. Using an AI algorithm can be a cost-effective way to bridge this gap, and the algorithm can even be run on a mobile phone.

Researchers are working on a tool that enables doctors to photograph x-ray films with their cell phones (rather than relying on the digital scans commonly used in wealthier countries) and then use an algorithm to detect issues like tuberculosis in the photos. The researchers emphasize that the tool is not meant to replace anyone, as many developing nations do not have radiologists in the first place. Instead, the aim is to give non-radiologists access to expertise by augmenting their skills with the tool.

Another short-term application of AI in the health sector is reviewing medical records to determine whether a patient actually needs a scan. Overuse of imaging is a concern for many medical economists, with over 80 million CT scans performed annually in the United States alone. Although imaging data is useful for training AI algorithms, scans are expensive and can subject patients to excessive radiation. In the future, algorithms may be able to analyze an image while the patient is still in the scanner and predict the final result, so that an accurate image could be obtained with less scanning time and less radiation exposure.

Finally, the most valuable role of AI is to act as a collaborative partner while doctors address medical issues that cannot be detected or solved by doctors alone.